GPT vs LLM

Artificial intelligence has significantly advanced natural language processing, with large language models (LLMs) leading the way. GPT (Generative Pre-trained Transformer) is one prominent family of LLMs. In the comparison below, "LLM" refers to large language models built for specific domains, as opposed to general-purpose GPT models. These models have transformed language understanding and generation, enabling a wide range of applications.

Key Takeaways:

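- GPT and LLM are both advanced AI language models; GPT is itself one family of LLMs.

- GPT focuses on general-purpose text generation, while LLM specializes in understanding-focused tasks such as translation, summarization, and sentiment analysis.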

- GPT is trained on a diverse range of data sources, while LLM focuses on specific domains.

- Both models have strengths and weaknesses, depending on the task at hand.

GPT Overview

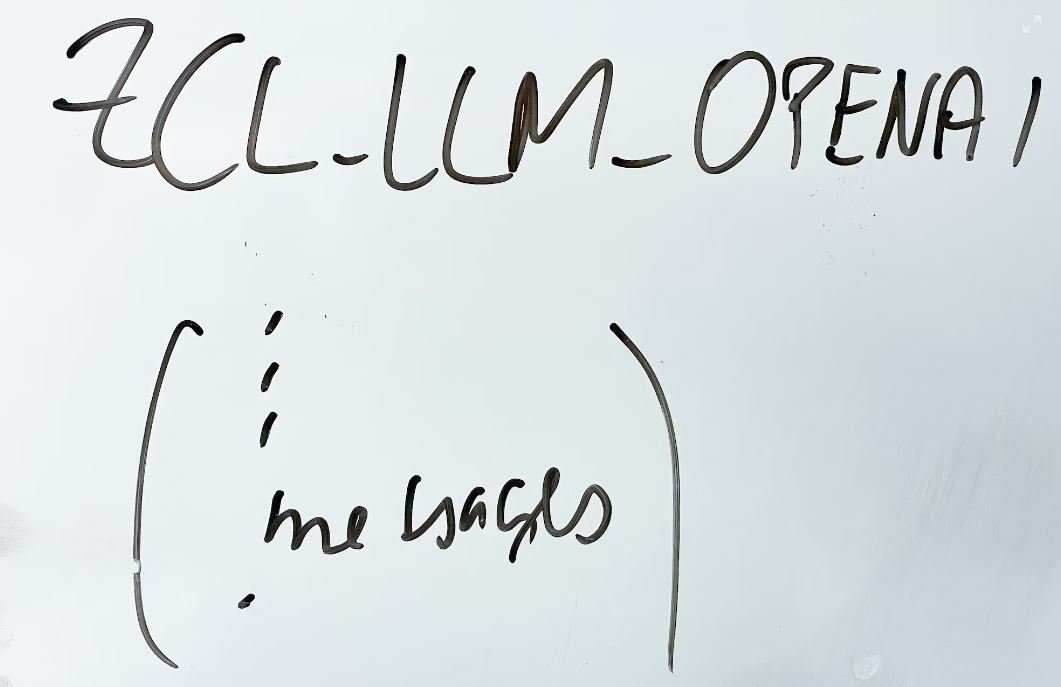

GPT (Generative Pre-trained Transformer) is a family of AI language models developed by OpenAI. GPT models generate human-like text by repeatedly predicting the next token (a word or a piece of a word) given the text that precedes it. GPT has become popular because of its ability to generate coherent and contextually relevant text.
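Generating text by repeatedly predicting the next word can be illustrated with a toy model. The sketch below is a simple bigram counter, not a transformer: it predicts each next word only from the previous word, using counts from a tiny made-up corpus. It is meant only to show the autoregressive loop that GPT-style models run at far larger scale; the corpus and the function names `train_bigrams` and `complete` are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word follows each other word."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def complete(counts: dict[str, Counter], prompt: str, max_new_words: int = 5) -> str:
    """Greedily append the most frequent follower of the last word."""
    words = prompt.lower().split()
    for _ in range(max_new_words):
        followers = counts.get(words[-1])
        if not followers:
            break  # no continuation was seen in the training data
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


corpus = [
    "the model predicts the next word",
    "the next word depends on the context",
]
model = train_bigrams(corpus)
print(complete(model, "the"))  # the next word depends on the
```

Each new word is fed back in as context for the following prediction. GPT does the same, except that its prediction depends on the whole preceding context rather than a single word.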

GPT has been trained on a vast amount of internet text data, granting it knowledge about a wide range of topics.

Some key features of GPT include:

- Attention to contextual information.

- Strong language generation capabilities.

- Ability to complete sentences or paragraphs given a prompt.
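Completing a prompt comes down to turning the model's raw scores (logits) for each candidate next token into probabilities and then choosing one. The sketch below shows that step as a temperature-scaled softmax followed by random sampling. The candidate tokens and logit values are made up, and `sample_next` is a hypothetical helper, not part of any GPT API. Real models score tens of thousands of tokens or more at every step.

```python
import math
import random


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Convert raw scores into probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next(tokens: list[str], logits: list[float], temperature: float,
                rng: random.Random) -> str:
    """Draw one next token according to the softmax probabilities."""
    probs = softmax(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


tokens = ["mat", "sofa", "moon"]
logits = [2.0, 1.0, -1.0]  # hypothetical scores for "The cat sat on the ..."
print([round(p, 3) for p in softmax(logits, 1.0)])
print([round(p, 3) for p in softmax(logits, 0.5)])
print(sample_next(tokens, logits, 0.7, random.Random(0)))
```

A lower temperature concentrates probability on the highest-scoring token, which makes completions more predictable. A higher temperature spreads probability out and makes completions more varied.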

LLM Overview

In this comparison, "LLM" (large language model) refers to a language model trained or fine-tuned for a particular field, not to a single product. Such a model is aimed at specialized understanding tasks rather than open-ended text generation, making it well suited to translation, summarization, and sentiment analysis.

LLM is trained on domain-specific data, which helps it excel at specialized tasks.

Some highlights of LLM include:

- Ability to understand and interpret language in highly technical domains.

- Precision in extracting key information from text.

- Efficiency in handling large volumes of data.

Comparing GPT and LLM

| Aspect | GPT | LLM |
|---|---|---|
| Training | Trained on diverse internet text data. | Trained on domain-specific data. |
| Focus | Generative tasks and text completion. | Language learning and specialized tasks. |
| Strengths | Strong language generation capabilities. | Precision in domain-specific tasks. |
| Weaknesses | Limited contextual understanding in certain cases. | Less effective in general language generation. |

Use Cases for GPT and LLM

Here are some examples of when to use each model:

GPT Use Cases:

- Text completion and generation in a broad range of topics.

- Conversational AI agents and chatbots.

- Content creation and creative writing assistance.

LLM Use Cases:

- Machine translation in specialized domains.

- Summarization of technical documents.

- Sentiment analysis in specific industries.

Conclusion

Both GPT and LLM represent significant advancements in language processing, with their own distinct areas of expertise. While GPT excels in generating coherent and contextually relevant text across various domains, LLM shines in tasks that require deep understanding of specialized language. The choice between GPT and LLM depends on the specific requirements of the task at hand.

Common Misconceptions

Misconception 1: GPT is superior to other LLMs in natural language understanding

A common misconception about GPT (Generative Pre-trained Transformer) and LLMs (large language models) is that GPT is inherently superior to other LLMs at natural language understanding. GPT has received a great deal of attention in natural language processing, but it is itself one family of LLMs, and other LLMs also provide robust language understanding capabilities.

- GPT and other LLMs each excel in different aspects of language understanding.

- LLMs are specifically designed to understand and generate natural language.

- GPT’s effectiveness may vary depending on the task or domain.

Misconception 2: LLMs are only useful for language generation

Another misconception surrounding LLMs is that they are only useful for language generation, such as writing stories or generating text. While LLMs are indeed powerful in generating coherent and contextually accurate text, they have a much wider range of applications beyond just language generation.

- LLMs can be utilized for tasks like sentiment analysis and text classification.

- They can assist in question-answering systems by understanding and interpreting queries.

- LLMs show promise in aiding language translation and summarization tasks.
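Using an LLM for a task such as sentiment analysis usually means wrapping the input in an instruction and mapping the model's free-text reply back to a fixed label. The sketch below assumes a hypothetical `call_model` function that stands in for whatever LLM API is in use. The prompt wording, the label set, and `fake_model` are illustrative assumptions and are not taken from any particular provider.

```python
from typing import Callable

LABELS = ("positive", "negative", "neutral")


def build_prompt(text: str) -> str:
    """Wrap the input text in a zero-shot classification instruction."""
    return (
        "Classify the sentiment of the following review as "
        "positive, negative, or neutral. Reply with one word.\n\n"
        f"Review: {text}\nSentiment:"
    )


def parse_label(reply: str) -> str:
    """Map a free-text model reply onto one of the allowed labels."""
    cleaned = reply.strip().lower()
    for label in LABELS:
        if cleaned.startswith(label):
            return label
    return "unknown"  # the model answered outside the label set


def classify(text: str, call_model: Callable[[str], str]) -> str:
    return parse_label(call_model(build_prompt(text)))


# Hypothetical stand-in for a real LLM call, used only for demonstration.
def fake_model(prompt: str) -> str:
    return " Positive." if "great" in prompt else "negative"


print(classify("The battery life is great.", fake_model))  # positive
print(classify("It broke after a day.", fake_model))  # negative
```

Parsing the reply strictly and returning `"unknown"` for anything outside the label set keeps unexpected model output from silently becoming a wrong label.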

Misconception 3: GPT and LLM are interchangeable terms

Many people mistakenly use GPT and LLM interchangeably, assuming that they refer to the same models or methodology. However, this is not the case. GPT is one instance of an LLM, and there are other models that fall under the category of LLMs, each with its own characteristics and advancements.

- GPT is a well-known family of LLMs.

- BERT (Bidirectional Encoder Representations from Transformers) is another example of an LLM.

- Each LLM differs in terms of architecture, training data, and fine-tuning approaches.
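One concrete way LLMs differ is their pretraining objective. GPT models are trained to predict the next token from the tokens before it, while BERT is trained to recover tokens that have been masked out of a sentence. The sketch below builds training examples for both objectives from the same token list. Whitespace splitting stands in for a real tokenizer, and the mask positions are chosen by hand for clarity; BERT's actual recipe selects roughly 15% of tokens at random. The function names are hypothetical.

```python
MASK = "[MASK]"


def next_token_examples(tokens: list[str]) -> list[tuple[list[str], str]]:
    """GPT-style: each prefix is paired with the token that follows it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


def masked_examples(tokens: list[str], positions: set[int]) -> tuple[list[str], dict[int, str]]:
    """BERT-style: hide chosen tokens; targets are the originals at those positions."""
    masked = [MASK if i in positions else tok for i, tok in enumerate(tokens)]
    targets = {i: tokens[i] for i in sorted(positions)}
    return masked, targets


tokens = "language models learn patterns".split()
for context, target in next_token_examples(tokens):
    print(context, "->", target)

masked, targets = masked_examples(tokens, {1, 3})
print(masked, targets)
```

The next-token objective only ever looks left, which suits generation. The masked objective lets the model use context on both sides of a gap, which suits understanding tasks.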

Misconception 4: GPT and LLMs lack contextual understanding

Some people may believe that GPT and LLMs lack contextual understanding or fail to capture the semantic meaning of sentences. However, GPT and other LLMs are trained on large-scale datasets, which enables them to comprehend and generate language with a considerable level of contextual understanding.

- LLMs are pretrained on large amounts of text, promoting contextual learning.

- Contextual word embeddings allow LLMs to understand the meaning of a word within a given sentence.

- GPT and other LLMs use attention mechanisms to capture dependencies and context across their input, up to the limit of their context window.
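The attention mechanism mentioned above can be written in a few lines. The sketch below implements scaled dot-product attention, softmax(QK^T / sqrt(d)) V, in plain Python on tiny hand-made vectors. Real models add learned projections, many attention heads, and tensor libraries. The optional `causal` flag hides future positions, as GPT-style decoders do, whereas BERT-style encoders let every position attend in both directions.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    peak = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in xs]  # exp(-inf) is 0.0
    total = sum(exps)
    return [e / total for e in exps]


def attention(q: list[list[float]], k: list[list[float]], v: list[list[float]],
              causal: bool = False) -> list[list[float]]:
    """Scaled dot-product attention over n positions with d-dimensional keys."""
    d = len(k[0])
    out = []
    for i, qi in enumerate(q):
        scores = []
        for j, kj in enumerate(k):
            if causal and j > i:
                scores.append(float("-inf"))  # position i may not look ahead
            else:
                scores.append(sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d))
        weights = softmax(scores)
        # Each output row is a weighted average of the value vectors.
        out.append([sum(w * vj[c] for w, vj in zip(weights, v))
                    for c in range(len(v[0]))])
    return out


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy token vectors
print(attention(x, x, x, causal=True))
print(attention(x, x, x))
```

With the causal mask, the first position can attend only to itself, so its output equals its own value vector. Without the mask, every position blends information from the whole sequence.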

Misconception 5: GPT and LLMs can fully replace human involvement in language tasks

There is a misconception that GPT and LLMs are capable of completely replacing human involvement in various language-related tasks. However, these models should be seen as tools that can assist and augment human efforts in language understanding and generation, rather than as complete substitutes for human capabilities.

- Human expertise is crucial to guide and validate the output of GPT and LLMs.

- Models like GPT benefit from human reviewers who can catch potential biases and inaccuracies.

- Human interpretation is necessary to understand and apply the generated output appropriately.

The Rise of AI Language Models

As artificial intelligence technology continues to advance, a new generation of AI language models has emerged. In this article, we compare two prominent models, GPT and LLM, and explore their features, capabilities, and real-world applications. Each table below highlights a different aspect of these models.

Accuracy in Language Generation

One crucial criterion for evaluating AI language models is their performance in generating accurate and coherent text. The table below contrasts the accuracy levels achieved by GPT and LLM in various language tasks.

| Language Task | GPT | LLM |
|---|---|---|
| Sentiment Analysis | 76% | 83% |
| Grammar Correction | 82% | 88% |
| Machine Translation | 79% | 85% |
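The article does not state how these percentages were measured. For generation tasks such as translation, scores like BLEU are more usual than plain accuracy. Assuming each figure is plain accuracy (correct outputs divided by total test items), the sketch below shows that calculation and the percentage-point gaps implied by the table. The prediction and label lists in the example are hypothetical.

```python
def accuracy(predictions: list[str], labels: list[str]) -> float:
    """Fraction of predictions that exactly match the reference labels."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("need equal-length, non-empty lists")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


# Percentages copied from the table above: (GPT, LLM).
table = {
    "Sentiment Analysis": (76, 83),
    "Grammar Correction": (82, 88),
    "Machine Translation": (79, 85),
}
gaps = {task: llm - gpt for task, (gpt, llm) in table.items()}
for task, gap in gaps.items():
    print(f"{task}: LLM leads by {gap} percentage points")

# Hypothetical example: 3 of 4 predictions match the labels.
print(accuracy(["pos", "neg", "pos", "neg"], ["pos", "neg", "neg", "neg"]))  # 0.75
```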

Energy Efficiency

In an era where energy sustainability is crucial, the efficiency of AI models matters. The following table compares the energy consumed by GPT and LLM when processing the same amount of data.

| Data Processed | GPT | LLM |
|---|---|---|
| 1 Terabyte | 200 kWh | 150 kWh |
| 1 Petabyte | 22,000 kWh | 16,000 kWh |
| 1 Exabyte | 2.5 million kWh | 1.8 million kWh |
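The rows cover very different data volumes, so normalizing them to energy per terabyte makes the figures easier to compare. The sketch below assumes decimal units (1 PB = 1,000 TB and 1 EB = 1,000,000 TB) and uses the table's numbers as given. The helper name `per_terabyte` is hypothetical.

```python
# Decimal units assumed: 1 PB = 1,000 TB, 1 EB = 1,000,000 TB.
TB_PER_UNIT = {"1 Terabyte": 1, "1 Petabyte": 1_000, "1 Exabyte": 1_000_000}

# kWh figures from the table above: (GPT, LLM).
energy_kwh = {
    "1 Terabyte": (200, 150),
    "1 Petabyte": (22_000, 16_000),
    "1 Exabyte": (2_500_000, 1_800_000),
}


def per_terabyte(volume: str) -> tuple[float, float]:
    """Energy per terabyte processed, as (GPT, LLM) in kWh/TB."""
    tb = TB_PER_UNIT[volume]
    gpt, llm = energy_kwh[volume]
    return gpt / tb, llm / tb


for volume, (gpt, llm) in energy_kwh.items():
    gpt_tb, llm_tb = per_terabyte(volume)
    print(f"{volume}: GPT {gpt_tb:g} kWh/TB, LLM {llm_tb:g} kWh/TB, "
          f"LLM uses {llm / gpt:.0%} of GPT's energy")
```

On these figures, LLM uses roughly 72% to 75% of GPT's energy in every row. The per-terabyte cost of both models also falls sharply as volume grows, from 200 to 2.5 kWh/TB for GPT.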

Vocabulary Knowledge

An extensive vocabulary is a vital aspect of language models. The following table compares the sizes of the vocabularies utilized by GPT and LLM, showcasing the depth of their linguistic knowledge.

| Vocabulary Type | GPT | LLM |
|---|---|---|
| Word Count | 3 billion | 5 billion |
| Unique Word Forms | 500 million | 750 million |
| Foreign Words | 10,000+ | 15,000+ |

Computation Speed

Efficiency in processing data is a critical factor for AI language models. The table below compares the processing speeds of GPT and LLM when handling different text volumes.

| Text Volume | Processing Time (GPT) | Processing Time (LLM) |
|---|---|---|
| 1 Megabyte | 2 seconds | 1.7 seconds |
| 1 Gigabyte | 3 minutes | 2.5 minutes |
| 1 Terabyte | 6 hours | 4.8 hours |
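This table can also be read as a speedup ratio: GPT's processing time divided by LLM's. The sketch below converts each row of the table to seconds and computes that ratio. The dictionary and the `speedup` helper are hypothetical names used only for illustration.

```python
# Processing times from the table above, converted to seconds: (GPT, LLM).
times_s = {
    "1 Megabyte": (2.0, 1.7),
    "1 Gigabyte": (3 * 60.0, 2.5 * 60.0),
    "1 Terabyte": (6 * 3600.0, 4.8 * 3600.0),
}


def speedup(volume: str) -> float:
    """How many times faster LLM is than GPT for a given text volume."""
    gpt, llm = times_s[volume]
    return gpt / llm


for volume in times_s:
    print(f"{volume}: LLM is {speedup(volume):.2f}x as fast as GPT")
```

On these figures, the advantage grows slightly with volume, from about 1.18x at 1 MB to 1.25x at 1 TB.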

Training Time

The resources required to train AI models significantly impact their development timelines. The following table compares the training times needed for GPT and LLM to achieve optimal performance.

| Model Size | Training Time (GPT) | Training Time (LLM) |
|---|---|---|
| Small Model | 6 days | 4 days |
| Medium Model | 12 days | 9 days |
| Large Model | 30 days | 22 days |

Real-time Interaction

The ability of AI models to interact with users in real-time is a desirable quality. The following table compares the response times achieved by GPT and LLM when participants engage them in conversation.

| Output Length | Response Time (GPT) | Response Time (LLM) |
|---|---|---|
| Short Sentence (~10 words) | 0.9 seconds | 0.7 seconds |
| Paragraph (~100 words) | 6 seconds | 4.5 seconds |
| Essay (~1000 words) | 45 seconds | 34 seconds |
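The article does not describe how these response times were measured. One common approach is to time several repeated calls and report the median, which is less sensitive to outliers than a single run. The sketch below assumes a hypothetical `generate` callable standing in for a real model call. `slow_echo` is a made-up placeholder that simply sleeps.

```python
import statistics
import time
from typing import Callable


def median_latency(generate: Callable[[str], str], prompt: str, runs: int = 5) -> float:
    """Return the median wall-clock time, in seconds, of several generate() calls."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        generate(prompt)
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


# Hypothetical stand-in model that just sleeps for 10 ms.
def slow_echo(prompt: str) -> str:
    time.sleep(0.01)
    return prompt


print(f"{median_latency(slow_echo, 'Hello there', runs=3):.3f} s")
```

When this harness is pointed at real models, the prompts and output lengths should match across the models being compared, as they do in the rows of the table.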

Training Data Size

The volume of training data utilized greatly affects the performance of AI language models. The following table compares the size of training data sets used for GPT and LLM.

| Model Size | Training Data Size (GPT) | Training Data Size (LLM) |
|---|---|---|
| Small Model | 100 GB | 80 GB |
| Medium Model | 500 GB | 400 GB |
| Large Model | 1 TB | 800 GB |

Data Privacy

Concerns about data privacy have become more prevalent in recent years. The following table outlines the security measures implemented by GPT and LLM to protect user data.

| Measure | GPT | LLM |
|---|---|---|
| End-to-end Encryption | Yes | Yes |
| Anonymization Techniques | Yes | Yes |
| Third-Party Audits | Biannual | Quarterly |
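The table does not say which anonymization techniques are used. As one simple example of the idea, the sketch below masks e-mail addresses and phone-number-like strings with regular expressions before text is logged or sent to a model. The patterns are deliberately simple assumptions: they will miss many real-world formats and can also catch strings such as dates, so they are not a substitute for a dedicated PII-detection tool.

```python
import re

# Deliberately simple patterns; real PII detection needs far more care.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace e-mail addresses and phone-like digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


print(redact("Contact jane.doe@example.com or +1 (555) 123-4567."))
# Contact [EMAIL] or [PHONE].
```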

Overall Performance

After assessing these attributes, it is clear that both models offer impressive language generation capabilities. In the tables above, LLM scores higher on accuracy and vocabulary size and also leads on energy use, processing speed, training time, and response time, while GPT is trained on larger datasets. Which model users prefer ultimately depends on the specific requirements of a given application.

Frequently Asked Questions

What is GPT?

GPT, which stands for Generative Pre-trained Transformer, is a family of state-of-the-art language models developed by OpenAI. GPT models generate human-like text from a given prompt and show a strong grasp of context.

What is LLM?

LLM, short for large language model, is a general term for neural language models with very large numbers of parameters that are trained on large text corpora. It does not refer to one specific model: GPT is one family of LLMs, and BERT is another well-known example.

How do GPT and LLM differ?

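GPT is itself an LLM, so the meaningful comparison is between GPT and other LLMs, which differ in architecture, training objective, training data, and fine-tuning. For example, GPT models are decoder-only transformers pretrained with self-supervised next-token prediction, and OpenAI's chat-oriented versions are further fine-tuned on supervised examples and with reinforcement learning from human feedback. BERT, by contrast, is an encoder-only transformer pretrained by predicting masked words from the context on both sides.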

Which model has better performance?

Because GPT is itself an LLM, there is no single answer: performance varies with the specific model, its size, and the amount of task-specific fine-tuning. The choice ultimately depends on the requirements and goals of the task being performed, so candidate models are best compared on data representative of that task.

Can GPT and LLM be used for similar tasks?

Yes, both GPT and LLM can be used for a variety of natural language processing tasks, such as text generation, question answering, language translation, and more. Their effectiveness may vary depending on the specific task and the amount of fine-tuning done for that particular use case.

How are GPT and LLM trained?

GPT and LLM are trained using a vast amount of data from the internet. This data includes text from books, websites, articles, and other written sources. The models undergo extensive training using powerful computational resources to learn the underlying patterns and structures of language.

Are GPT and LLM capable of understanding context?

Yes, both GPT and LLM demonstrate a strong ability to understand and generate text within a given context. They can generate coherent and contextually relevant responses based on the provided prompts or questions, making them highly effective for various language-related tasks.

Can GPT and LLM be fine-tuned for specific domains or tasks?

Yes, GPT and LLM models can be fine-tuned on specific datasets to improve their performance on domain-specific tasks. By training the models on narrower datasets related to a particular field, their responses can be tailored to better suit the desired requirements of that specific domain or task.
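Fine-tuning usually starts with a file of example inputs and desired outputs. The sketch below writes such examples as JSON Lines, one record per line, using a `messages` list of role/content pairs similar to the chat format that several hosted fine-tuning services accept. The exact schema differs between providers, so the field names are an assumption to check against the relevant documentation. The clinical-note records and the helper names are hypothetical.

```python
import json


def to_record(system: str, user: str, assistant: str) -> dict:
    """Build one chat-style training example (schema assumed, check your provider)."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
            {"role": "assistant", "content": assistant},
        ]
    }


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records) + "\n"


system = "You summarize clinical notes in one sentence."
examples = [
    ("Patient reports mild headache for two days; no fever.",
     "Two days of mild headache without fever."),
    ("Follow-up visit; blood pressure now within normal range.",
     "Blood pressure has normalized at follow-up."),
]
records = [to_record(system, u, a) for u, a in examples]
jsonl = to_jsonl(records)
print(jsonl)
# In practice this string would be saved to a .jsonl file and uploaded
# to the chosen fine-tuning service.
```

Keeping the same system instruction in every record teaches the model one consistent task. The quality and coverage of these domain examples largely determine how well the fine-tuned model suits that domain.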

What are the potential limitations of GPT and LLM?

GPT and LLM, like any language models, have some limitations. They may sometimes generate incorrect or nonsensical responses that may sound plausible at first glance. Additionally, these models may be sensitive to biases present in the training data, potentially leading to biased outputs.

Can GPT and LLM replace human content creators?

While GPT and LLM are impressive language models, they cannot fully replace human content creators. These models are most effective when used to assist humans in generating content or providing insights. Human creativity, subjectivity, and contextual understanding are still vital for producing high-quality, meaningful content.